Natural Language Processing

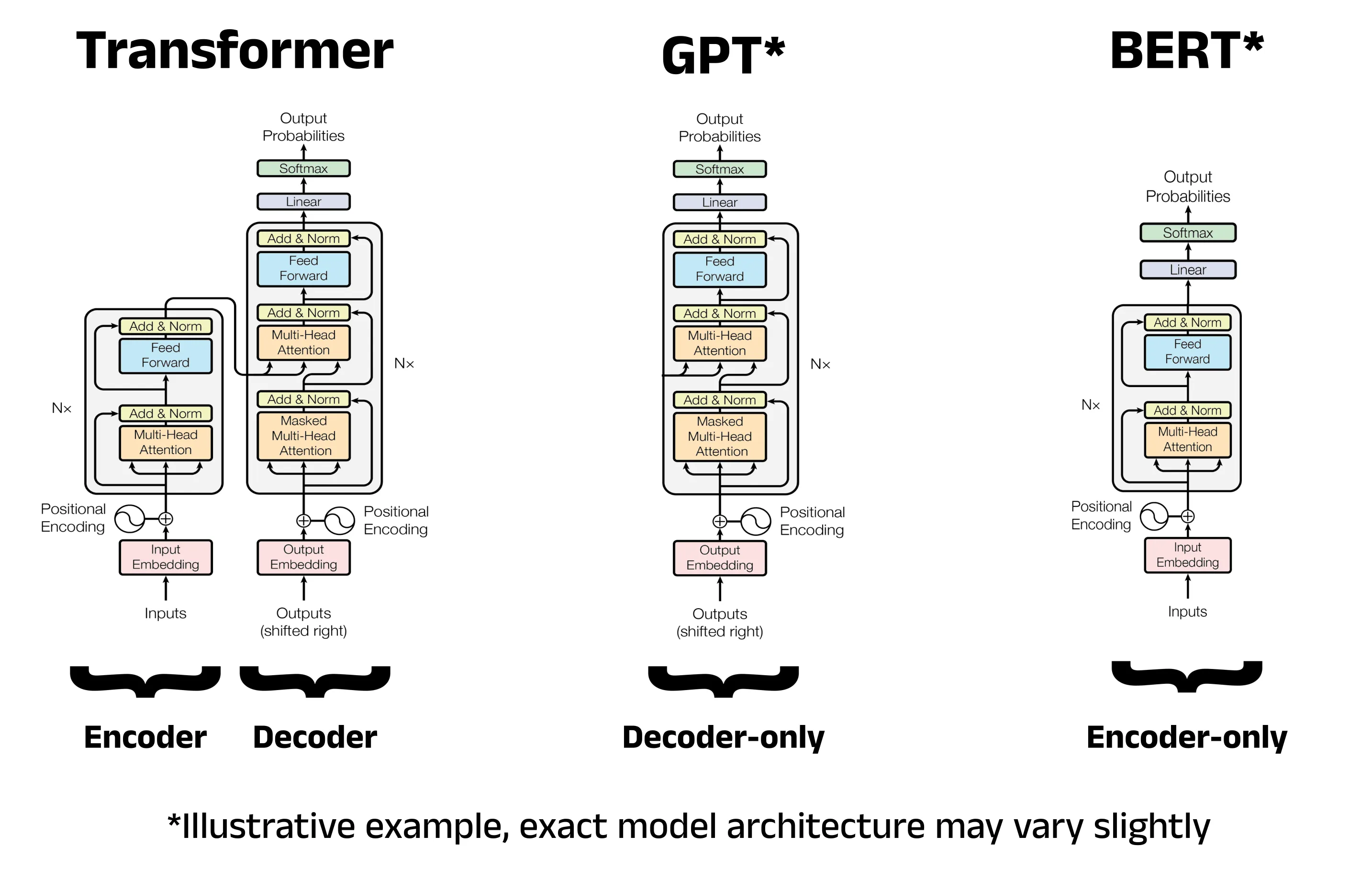

Bert-TweetEval: Natural Language Classification

Bidirectional Encoder Representations from Transformers (BERT) is an encoder-only transformer architecture that is well suited to certain tasks, such as natural language classification.

March 5, 2026

Computer Vision

ResNet10 ImageNet Classification on the MLA-100 NPU

We train and compile a ResNet10 classification model on an ImageNet subset. We then quantize the model and run inference on the Mobilint MLA100, an energy-efficient NPU.

March 1, 2026

SLMs

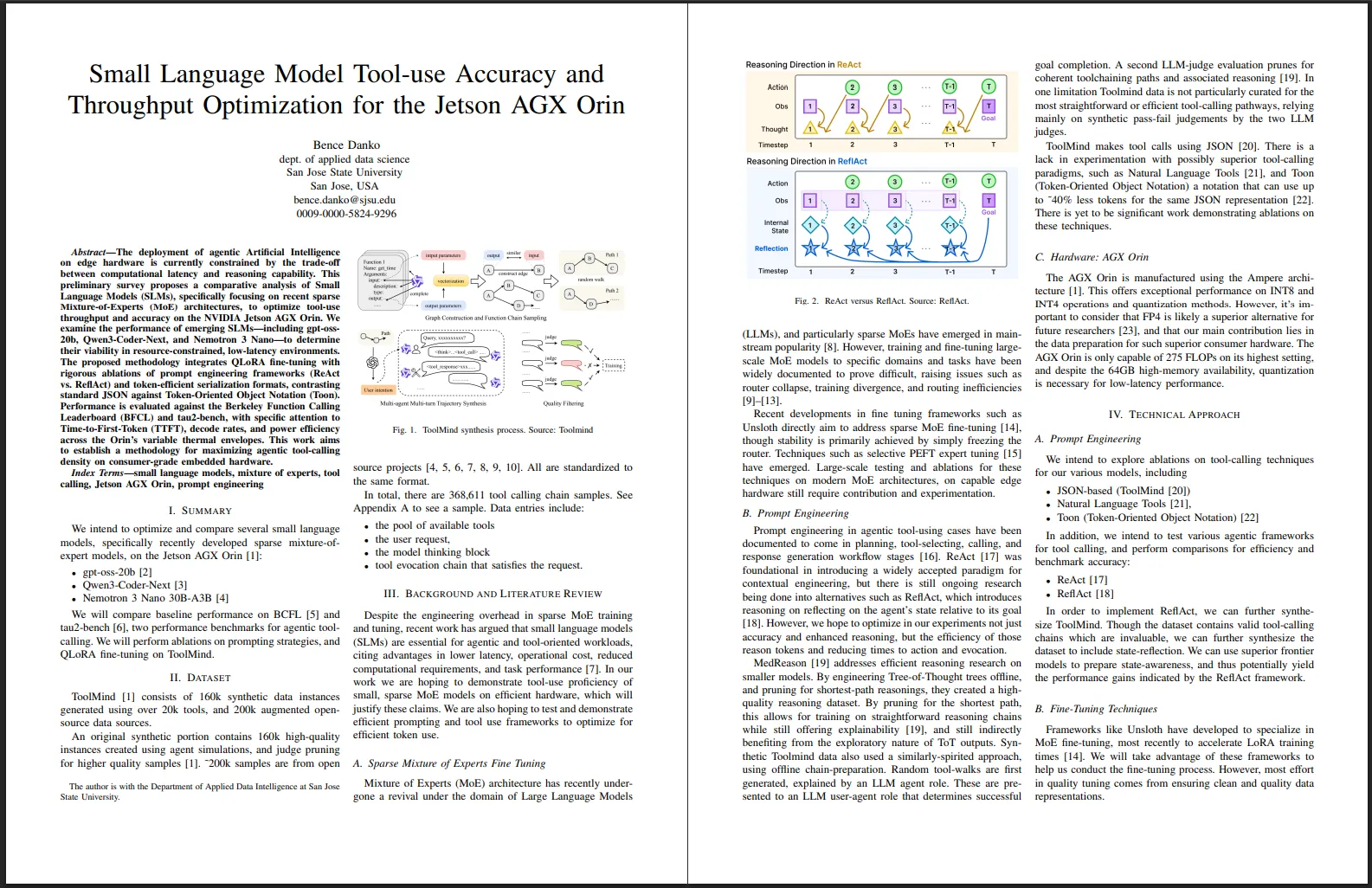

Small Language Model Tool-use Accuracy and Throughput Optimization for the Jetson AGX Orin

A brief survey and motivation for optimizing low-latency tool-use throughput on the Jetson AGX Orin.

February 14, 2026