Small Language Model Tool-use Accuracy and Throughput Optimization for the Jetson AGX Orin

PDF

Abstract

The deployment of agentic Artificial Intelligence on edge hardware is currently constrained by the trade-off between computational latency and reasoning capability. This preliminary survey proposes a comparative analysis of Small Language Models (SLMs), specifically focusing on recent sparse Mixture-of-Experts (MoE) architectures, to optimize tool-use throughput and accuracy on the NVIDIA Jetson AGX Orin. We examine the performance of emerging SLMs—including gpt-oss-20b, Qwen3-Coder-Next, and Nemotron 3 Nano—to determine their viability in resource-constrained, low-latency environments. The proposed methodology integrates QLoRA fine-tuning with rigorous ablations of prompt engineering frameworks (ReAct vs. ReflAct) and token-efficient serialization formats, contrasting standard JSON against Token-Oriented Object Notation (Toon). Performance is evaluated against the Berkeley Function Calling Leaderboard (BFCL) and tau2-bench, with specific attention to Time-to-First-Token (TTFT), decode rates, and power efficiency across the Orin’s variable thermal envelopes. This work aims to establish a methodology for maximizing agentic tool-calling density on consumer-grade embedded hardware.

Summary

We intend to optimize and compare several small language models, specifically recently developed sparse mixture-of-expert models, on the Jetson AGX Orin [1]:

We will compare baseline performance on BFCL [5] and tau2-bench [6], two performance benchmarks for agentic tool-calling. We will perform ablations on prompting strategies, and QLoRA fine-tuning on ToolMind.

Dataset

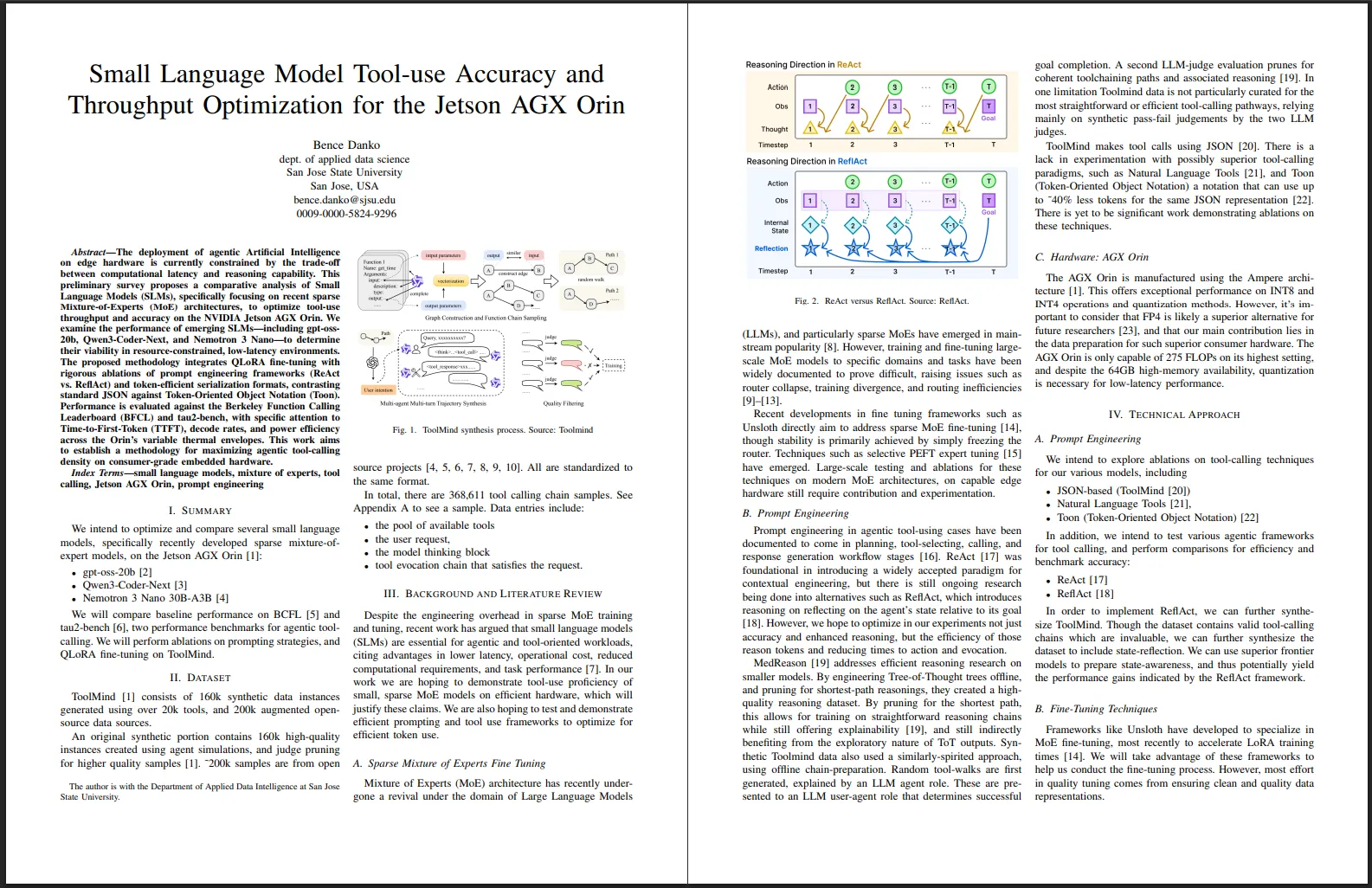

ToolMind [7] consists of 160k synthetic data instances generated using over 20k tools, and 200k augmented open-source data sources.

An original synthetic portion contains 160k high-quality instances created using agent simulations, and judge pruning for higher quality samples [7]. ~200k samples are from open source projects [8], [9], [10], [11], [12], [13], [14]. All are standardized to the same format.

In total, there are 368,611 tool calling chain samples. See Appendix A to see a sample. Data entries include:

- the pool of available tools

- the user request,

- the model thinking block

- tool evocation chain that satisfies the request.

Background and Literature Review

Despite the engineering overhead in sparse MoE training and tuning, recent work has argued that small language models (SLMs) are essential for agentic and tool-oriented workloads, citing advantages in lower latency, operational cost, reduced computational requirements, and task performance [15]. In our work we are hoping to demonstrate tool-use proficiency of small, sparse MoE models on efficient hardware, which will justify these claims. We are also hoping to test and demonstrate efficient prompting and tool use frameworks to optimize for efficient token use.

Sparse Mixture of Experts Fine Tuning

Mixture of Experts (MoE) architecture has recently undergone a revival under the domain of Large Language Models (LLMs), and particularly sparse MoEs have emerged in mainstream popularity [16]. However, training and fine-tuning large-scale MoE models to specific domains and tasks have been widely documented to prove difficult, raising issues such as router collapse, training divergence, and routing inefficiencies [17], [18], [19], [20], [21].

Recent developments in fine tuning frameworks such as Unsloth directly aim to address sparse MoE fine-tuning [22], though stability is primarily achieved by simply freezing the router. Techniques such as selective PEFT expert tuning [23] have emerged. Large-scale testing and ablations for these techniques on modern MoE architectures, on capable edge hardware still require contribution and experimentation.

Prompt Engineering

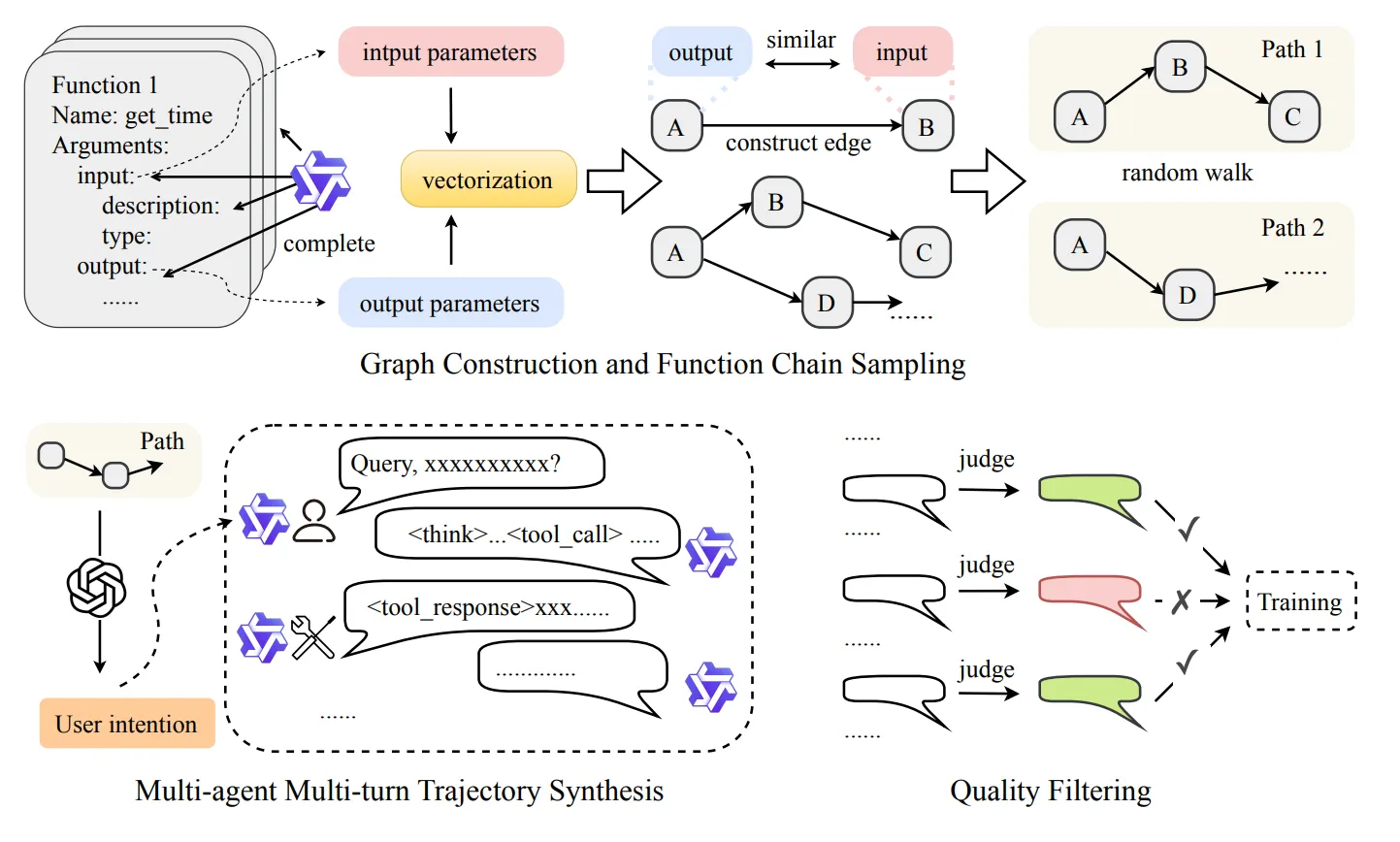

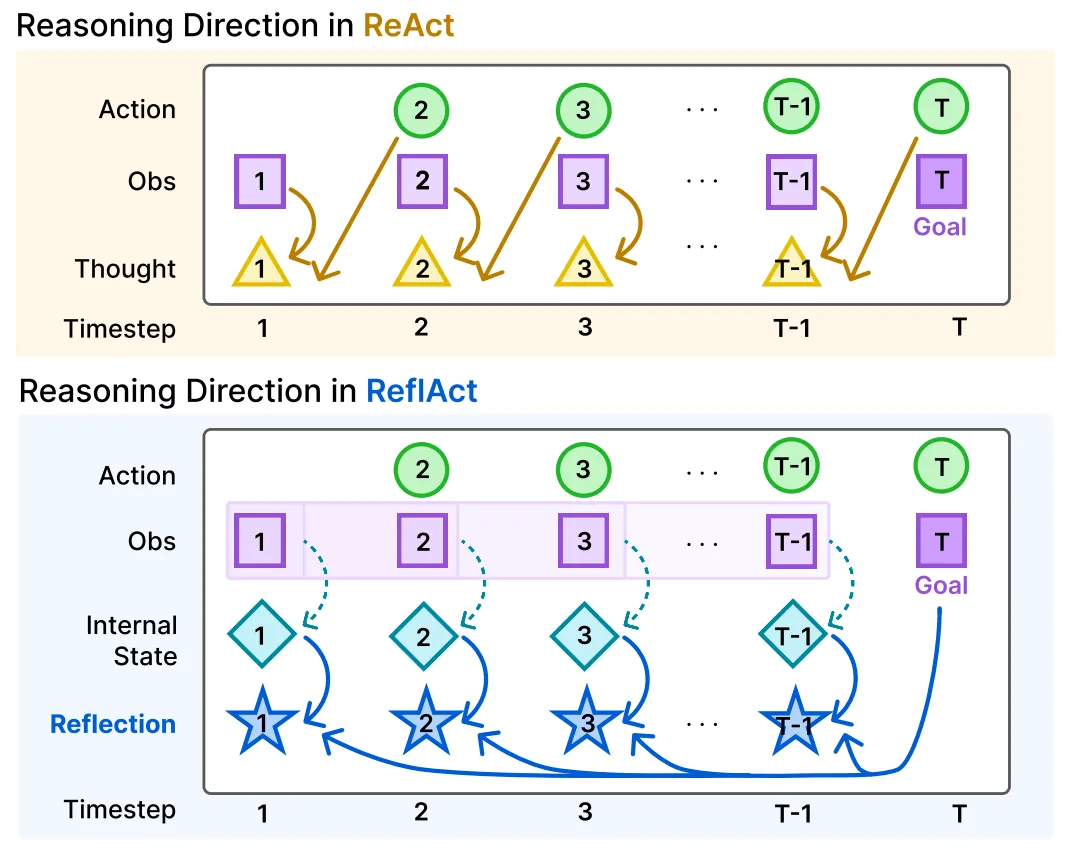

Prompt engineering in agentic tool-using cases have been documented to come in planning, tool-selecting, calling, and response generation workflow stages [24]. ReAct [25] was foundational in introducing a widely accepted paradigm for contextual engineering, but there is still ongoing research being done into alternatives such as ReflAct, which introduces reasoning on reflecting on the agent’s state relative to its goal [26]. However, we hope to optimize in our experiments not just accuracy and enhanced reasoning, but the efficiency of those reason tokens and reducing times to action and evocation.

MedReason [27] addresses efficient reasoning research on smaller models. By engineering Tree-of-Thought trees offline, and pruning for shortest-path reasonings, they created a high-quality reasoning dataset. By pruning for the shortest path, this allows for training on straightforward reasoning chains while still offering explainability [27], and still indirectly benefiting from the exploratory nature of ToT outputs. Synthetic Toolmind data also used a similarly-spirited approach, using offline chain-preparation. Random tool-walks are first generated, explained by an LLM agent role. These are presented to an LLM user-agent role that determines successful goal completion. A second LLM-judge evaluation prunes for coherent toolchaining paths and associated reasoning [27]. In one limitation Toolmind data is not particularly curated for the most straightforward or efficient tool-calling pathways, relying mainly on synthetic pass-fail judgements by the two LLM judges.

ToolMind makes tool calls using JSON [7]. There is a lack in experimentation with possibly superior tool-calling paradigms, such as Natural Language Tools [28], and Toon (Token-Oriented Object Notation) a notation that can use up to ~40% less tokens for the same JSON representation [29]. There is yet to be significant work demonstrating ablations on these techniques.

Hardware: AGX Orin

The AGX Orin is manufactured using the Ampere architecture [1]. This offers exceptional performance on INT8 and INT4 operations and quantization methods. However, it’s important to consider that FP4 is likely a superior alternative for future researchers [30], and that our main contribution lies in the data preparation for such superior consumer hardware. The AGX Orin is only capable of 275 FLOPs on its highest setting, and despite the 64GB high-memory availability, quantization is necessary for low-latency performance.

Technical Approach

Prompt Engineering

We intend to explore ablations on tool-calling techniques for our various models, including

In addition, we intend to test various agentic frameworks for tool calling, and perform comparisons for efficiency and benchmark accuracy:

In order to implement ReflAct, we can further synthesize ToolMind. Though the dataset contains valid tool-calling chains which are invaluable, we can further synthesize the dataset to include state-reflection. We can use superior frontier models to prepare state-awareness, and thus potentially yield the performance gains indicated by the ReflAct framework.

Fine-Tuning Techniques

Frameworks like Unsloth have developed to specialize in MoE fine-tuning, most recently to accelerate LoRA training times [22]. We will take advantage of these frameworks to help us conduct the fine-tuning process. However, most effort in quality tuning comes from ensuring clean and quality data representations.

Performance Evaluation

ToolMind itself was evaluated on BFCL [5], tau-bench [31], and tau2-bench [6]. We’ll be evaluating on the same benchmarks in order to establish consistent and comparable baselines our own developments.

We hope to construct these ablations specifically that will demonstrate performance on the AGX Orin:

Baseline Metrics Template

| Model | TTFT1 (m/s) | Prefill2 (tok/sec) | Decode3 (tok/sec) | Accuracy |

|---|---|---|---|---|

| x | x | x | x | x |

These will be carried out on 15W, 30W, 45W and 60W modes.