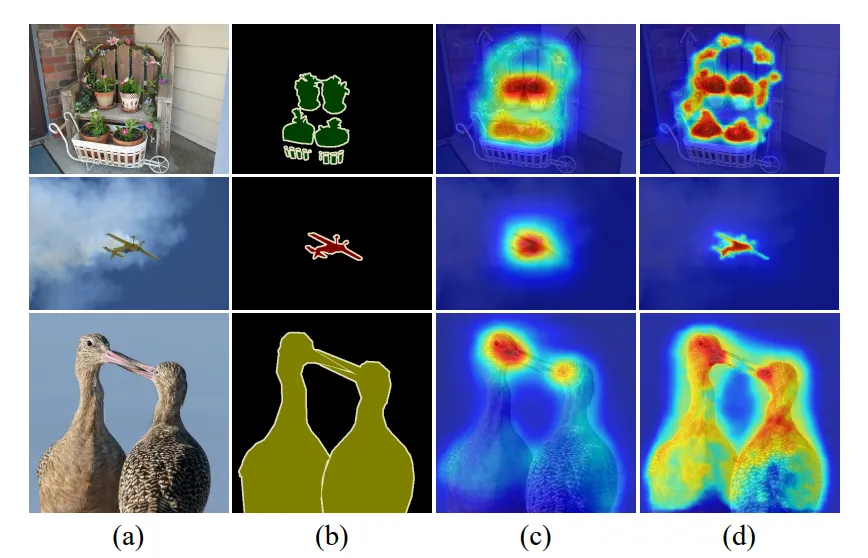

Discriminative Region Problem

A failure mode in which a standard classifier attends only to the most distinguishing features it needs to output a confident classification, ignoring other important traits of the object.

Dynamic Masking Augmentation Techniques

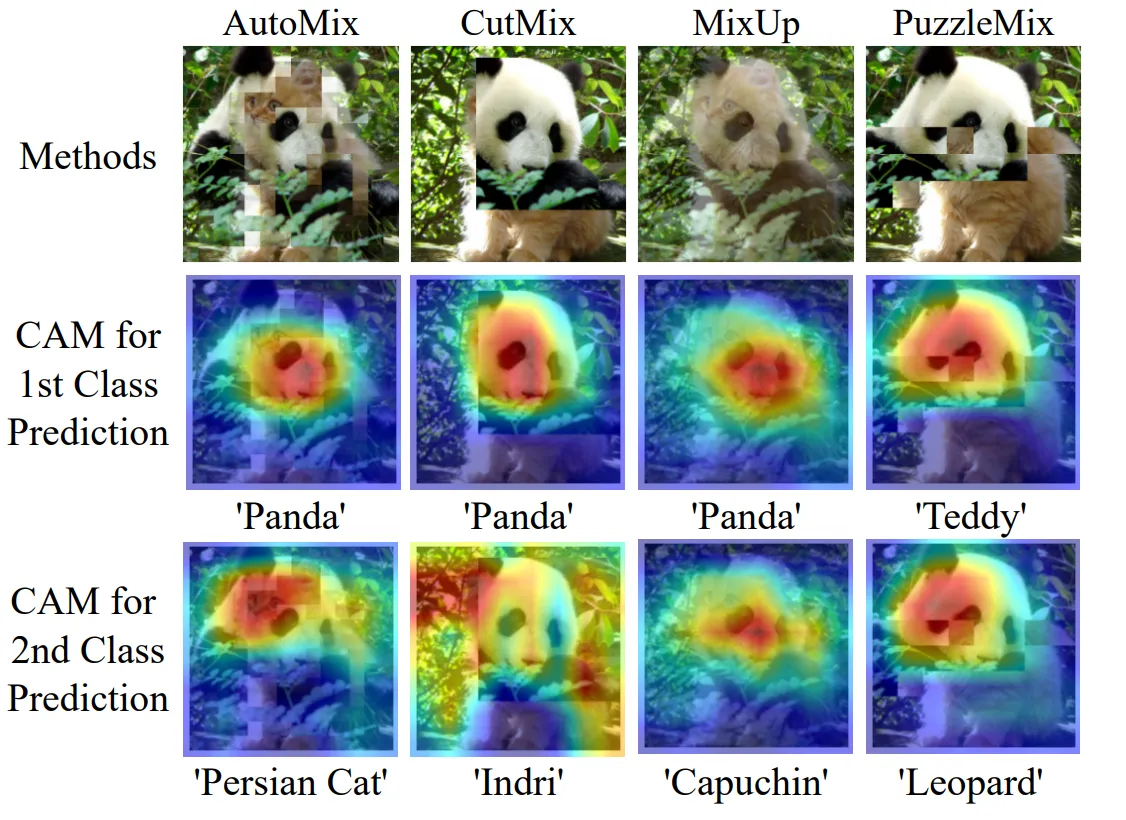

A limitation of the Park et al. formalization is that dynamic masking techniques, such as PuzzleMix [1] and AutoMix [2], cannot be formally explained by their framework, because their theorem relies on the mask being stochastic and independent of pixel values. Once the mask becomes aware of pixel content, we can no longer approximate the loss function with a straightforward input-gradient term.

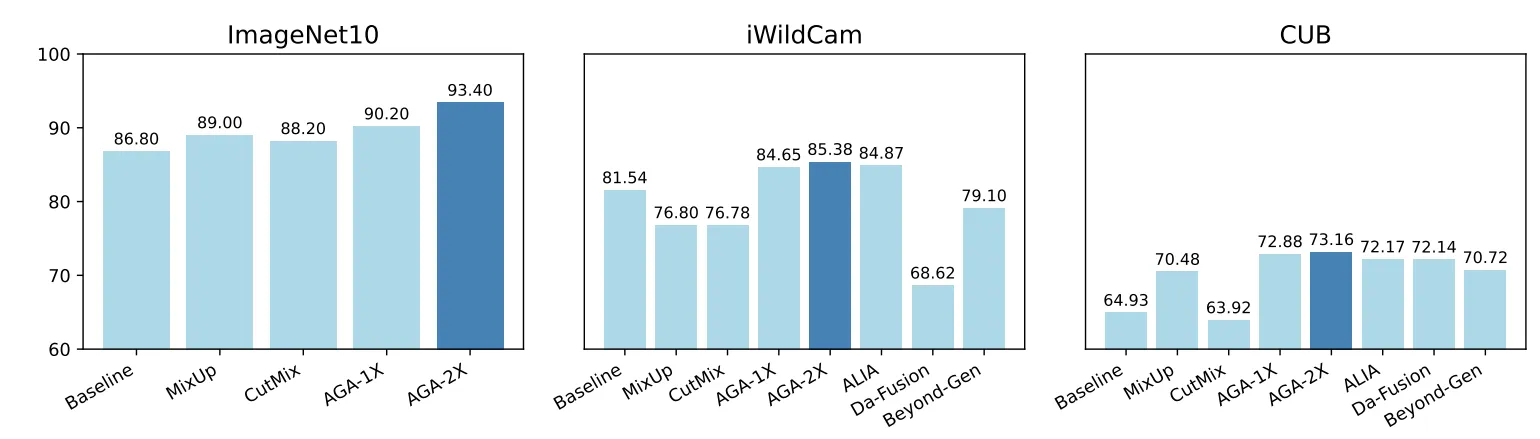

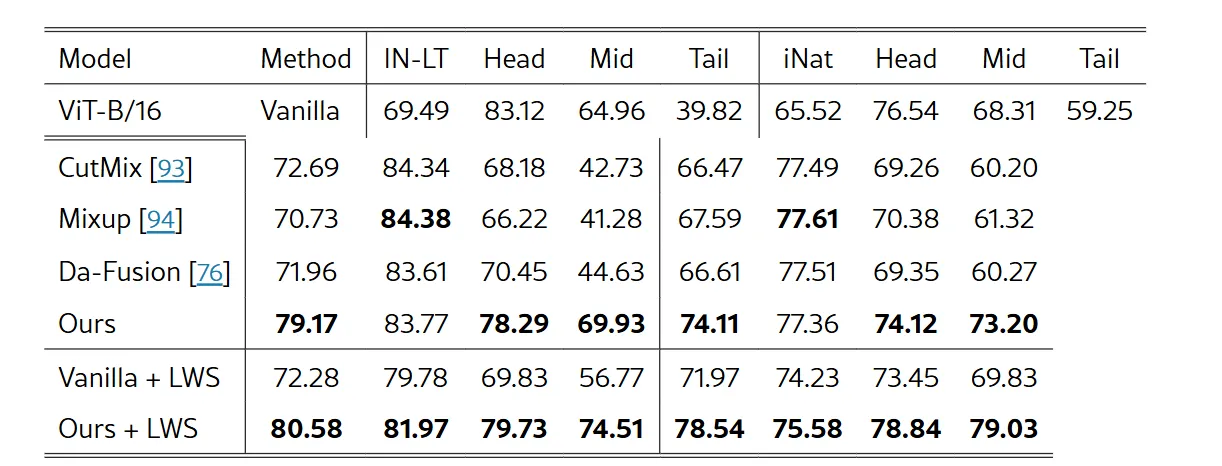

Empirically, although they achieve state-of-the-art results against MixUp and CutMix in selected setups, the overall impact on head class prediction is small. These techniques also take significantly more compute per batch than stochastic techniques.

F1 score

Harmonic mean of Precision and Recall. We use it when we want a single metric that balances both, especially when we have an uneven class distribution.
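As a minimal sketch of the definition above, the harmonic mean can be computed directly from a precision and recall pair. The function name `f1_score` and the zero-division convention (returning 0.0 when both inputs are zero) are assumptions made for illustration.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall.

    Returns 0.0 when both inputs are zero (an illustrative convention).
    """
    if precision + recall == 0.0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


# A balanced pair scores higher than a lopsided one with the same arithmetic mean.
balanced = f1_score(0.6, 0.6)
lopsided = f1_score(1.0, 0.2)
```

Because the harmonic mean is dominated by the smaller value, the lopsided pair (perfect precision, 20% recall) scores about 0.33, well below the balanced pair.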

False Positive Rate (FPR)

The fraction of actual negatives that the model incorrectly flags as positive: FP / (FP + TN).

Generative Image Augmentation

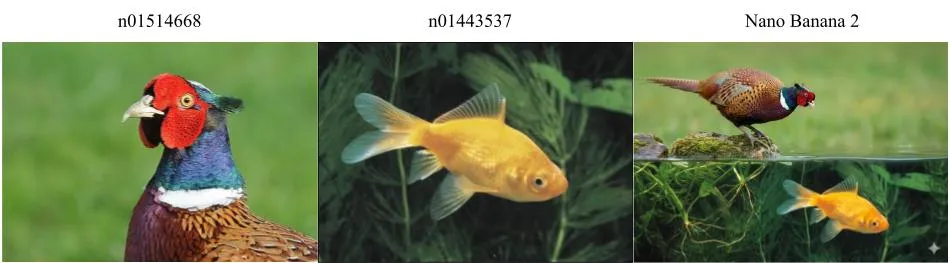

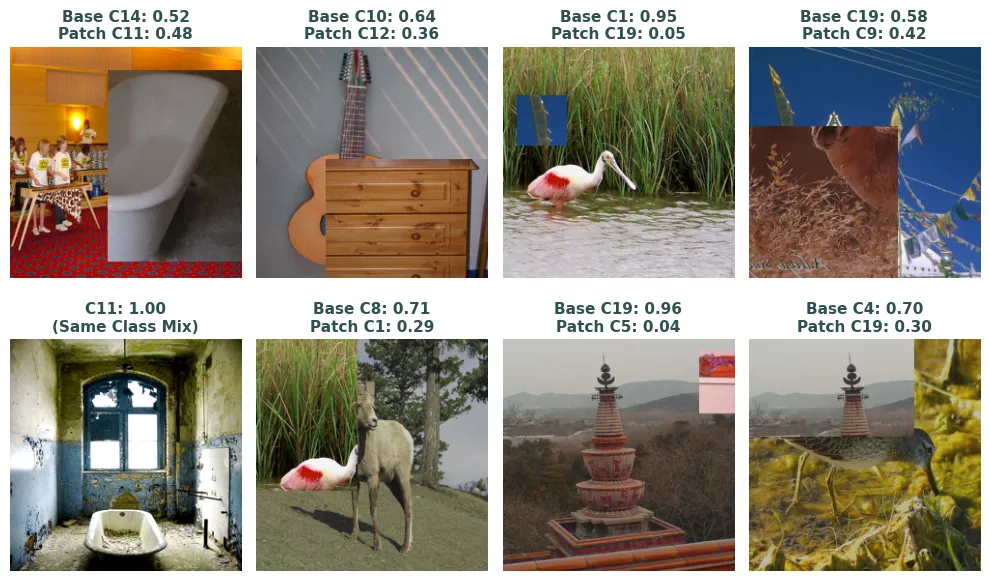

Earlier work has shown that using diffusion models to synthesize and augment data improves classification results on ImageNet [1], [2]. With recent advances in image generation models, this line of work has moved to more sophisticated enhancements, such as background diversification and saliency-aware generation pipelines [3], [4], [5]. Generally, these techniques isolate the ground-truth subject via segmentation or feature extraction, and use the generative model as an augmenter to build the surrounding context and handle the blending.

ImageNet

ImageNet [1] is a large-scale visual dataset designed for object recognition research. It became foundational to modern computer vision, especially after the University of Toronto team’s breakthrough in the 2012 ImageNet Large Scale Visual Recognition Challenge (ILSVRC) using AlexNet [2]. The original dataset includes 14 million images and 21,841 synsets (classes), though teams often evaluate on the ILSVRC subset benchmark [3], consisting of 1.2 million training images and 1,000 classes.

The original ImageNet images were not exhaustively labeled. Each image carries a single label, so an image labeled “dog” might also contain trees, cars, or people. ILSVRC was essential for adding stricter quality control, as well as bounding boxes and object localization for more nuanced identification.

Jetson AGX Orin

An embedded system-on-module from NVIDIA’s Jetson line, aimed at on-device (edge) AI inference.

L1 Regularization (Lasso)

A method of penalizing weights in proportion to their L1 norm, the sum of their absolute values (Manhattan distance). It pushes weights to zero and so encourages sparsity.

$$J(w) = L(w) + \lambda \lVert w \rVert_1$$

Where:

- $J(w)$: The final objective function to be minimized.

- $L(w)$: The original loss function (e.g., MSE, Cross-Entropy) measuring the error on the training data.

- $\lambda$ (Lambda): The regularization strength hyperparameter. Controls the trade-off between fitting the data and keeping weights small.

- $\lVert w \rVert_1$: The L1 norm of the weight vector, $\sum_i |w_i|$.
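To make the sparsity effect concrete, here is a small NumPy sketch. It assumes the conventional objective of data loss plus `lam` times the L1 norm. The helper names `l1_objective` and `soft_threshold` are illustrative. Soft-thresholding is the standard proximal step for an L1 penalty, used here only to show how small weights land exactly on zero.

```python
import numpy as np


def l1_objective(data_loss: float, weights: np.ndarray, lam: float) -> float:
    """Data loss plus lam times the sum of absolute weight values."""
    return float(data_loss + lam * np.sum(np.abs(weights)))


def soft_threshold(weights: np.ndarray, threshold: float) -> np.ndarray:
    """Proximal step for the L1 penalty: shrink every weight toward zero by
    `threshold` and clamp anything that would cross zero to exactly zero."""
    return np.sign(weights) * np.maximum(np.abs(weights) - threshold, 0.0)


w = np.array([0.05, -0.3, 1.0])
w_sparse = soft_threshold(w, 0.1)  # the smallest weight becomes exactly 0
```

The first weight is smaller than the threshold, so it is zeroed out entirely, while the larger weights are only shifted by a constant amount.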

L2 Regularization (Ridge)

A method of penalizing weights in proportion to their squared L2 norm, the sum of their squared values (squared Euclidean length). It shrinks all weights toward zero but rarely makes them exactly zero, so it discourages large weights without encouraging sparsity.

$$J(w) = L(w) + \lambda \lVert w \rVert_2^2$$

Where:

- $J(w)$: The final objective function to be minimized.

- $L(w)$: The original loss function (e.g., MSE, Cross-Entropy) measuring the error on the training data.

- $\lambda$ (Lambda): The regularization strength hyperparameter. Controls the trade-off between fitting the data and keeping weights small.

- $\lVert w \rVert_2^2$: The squared L2 norm of the weight vector, $\sum_i w_i^2$.
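For contrast with L1, the sketch below assumes the conventional ridge objective (data loss plus `lam` times the sum of squared weights) and applies one gradient step on the penalty alone. The names `l2_objective` and `l2_penalty_step` are illustrative, not from any library.

```python
import numpy as np


def l2_objective(data_loss: float, weights: np.ndarray, lam: float) -> float:
    """Data loss plus lam times the sum of squared weight values."""
    return float(data_loss + lam * np.sum(weights ** 2))


def l2_penalty_step(weights: np.ndarray, lam: float, lr: float) -> np.ndarray:
    """One gradient-descent step on the penalty term only.

    The gradient of lam * sum(w^2) is 2 * lam * w, so the step rescales
    every weight by the same factor (1 - 2 * lr * lam).
    """
    return weights * (1.0 - 2.0 * lr * lam)


w = np.array([0.05, -0.3, 1.0])
w_shrunk = l2_penalty_step(w, lam=0.1, lr=0.5)  # every weight scaled by 0.9
```

The shrinkage is multiplicative, so weights get smaller but do not reach exactly zero. This is why ridge, unlike lasso, does not produce sparse solutions.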

Linear Attention

Standard transformers apply softmax to the attention scores, which requires comparing every query with every key and so costs quadratic time in the sequence length. Modern techniques approximate or replace the softmax to reduce this to linear time [1].
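One common way to get linear cost is to replace the softmax with a positive feature map and reorder the matrix products. The NumPy sketch below uses `elu(x) + 1` as the feature map, which is one published choice and an assumption here. It is not necessarily the method the cited reference uses. All function names are illustrative.

```python
import numpy as np


def softmax_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Standard attention: builds an (n_queries, n_keys) matrix, hence quadratic."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v


def feature_map(x: np.ndarray) -> np.ndarray:
    """elu(x) + 1, which is strictly positive."""
    return np.where(x > 0, x + 1.0, np.exp(x))


def linear_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Kernelized attention: summarize keys and values once, then reuse the
    summary for every query, so cost grows linearly with sequence length."""
    phi_q, phi_k = feature_map(q), feature_map(k)
    kv = phi_k.T @ v               # (d, d_v) summary of all keys and values
    k_sum = phi_k.sum(axis=0)      # (d,) normalizer summary
    return (phi_q @ kv) / (phi_q @ k_sum)[:, None]
```

The two functions do not return the same numbers, since the feature map only stands in for the softmax kernel. The point is that `linear_attention` never materializes the query-by-key matrix.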

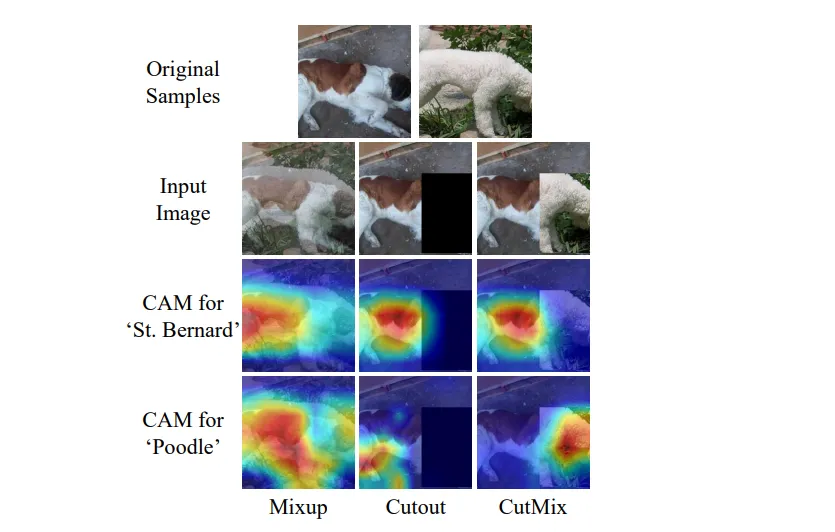

Mixed-Sample Data Augmentation

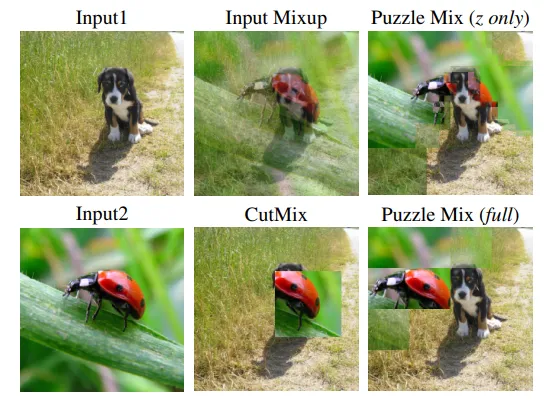

In 2022, Park et al. provided a unified formal treatment of mixed-sample data augmentation (MSDA) methods [1], including CutMix [2] and MixUp [3], demonstrating that such techniques are equivalent to designing a spatial decay kernel for gradient regularization. Each technique has its own strengths and limitations. For example, CutMix may introduce multi-label noise by erasing a class that only existed in the cutout area, or by assigning label weight to a class that isn’t actually present in the pasted patch. There are further static enhancements of these techniques, such as Fourier Mix (FMix) [4], which produces smooth and continuous patches instead of sharp ones, and ResizeMix [5], which resizes the patching image instead of cropping it.

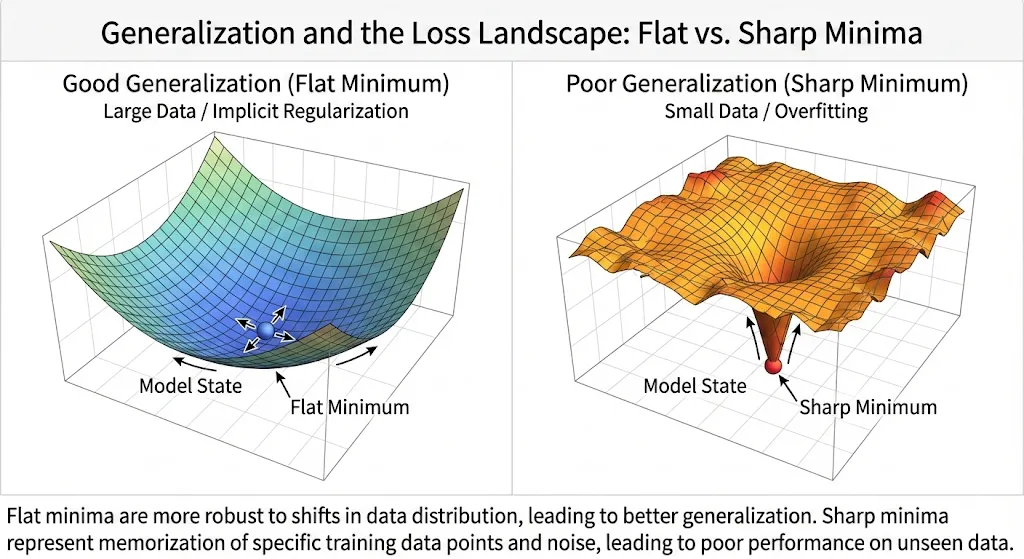

The Park et al. formalization demonstrates how MSDA reshapes the loss landscape, mathematically forcing the model to learn smoother functions by penalizing erratic changes in its input gradients, weighted by how close the data points are (spatial decay). The core of the theorem relies on the stochastic nature of the applicable image augmentation techniques. They used their theorems to formalize Hybrid Mix (HMix) and Gaussian Mix (GMix), intermediate spatial regularization techniques that sit between the CutMix and MixUp distributions.
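The two endpoints of this family, MixUp and CutMix, can be sketched in a few lines of NumPy. This is a simplified illustration rather than the reference implementations. The function names, the fixed `lam` argument (normally sampled from a Beta distribution), and the box-sampling details are assumptions.

```python
import numpy as np


def mixup(x_a, x_b, y_a, y_b, lam):
    """Blend two images and their one-hot labels globally with weight lam."""
    return lam * x_a + (1.0 - lam) * x_b, lam * y_a + (1.0 - lam) * y_b


def cutmix(x_a, x_b, y_a, y_b, lam, rng):
    """Paste a random box from x_b into x_a and mix the labels in proportion
    to the area that was actually replaced."""
    h, w = x_a.shape[:2]
    cut_h = int(h * np.sqrt(1.0 - lam))
    cut_w = int(w * np.sqrt(1.0 - lam))
    cy, cx = int(rng.integers(h)), int(rng.integers(w))
    top, bottom = max(cy - cut_h // 2, 0), min(cy + cut_h // 2, h)
    left, right = max(cx - cut_w // 2, 0), min(cx + cut_w // 2, w)

    mixed = x_a.copy()
    mixed[top:bottom, left:right] = x_b[top:bottom, left:right]

    # The box may be clipped at the border, so recompute the label weight.
    lam_adj = 1.0 - (bottom - top) * (right - left) / (h * w)
    return mixed, lam_adj * y_a + (1.0 - lam_adj) * y_b
```

Note that the CutMix label weights come from patch area alone, not from what the patch contains. That is the source of the multi-label noise described above.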

MSDA Regularization

MixUp blends images globally across all pixels, creating semi-transparent ghost images that hamper structural awareness. MixUp-CAM [1] addresses this by formalizing uncertainty regularization constraints:

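$$\mathcal{L} = \mathcal{L}_{cls} + \lambda_{ent} \mathcal{L}_{ent} + \lambda_{con} \mathcal{L}_{con}$$

This formula combines a classification loss ($\mathcal{L}_{cls}$), class-wise entropy regularization ($\mathcal{L}_{ent}$), and a spatial concentration loss ($\mathcal{L}_{con}$), with weights $\lambda_{ent}$ and $\lambda_{con}$, to optimize the MixUp procedure and prevent the response from being too divergent.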

CutMix already enforces spatial regularization through its geometry, encouraging feature exploration while preserving spatial integrity. There is some research into techniques like semantic proportioning, making the augmentation differentiable, and using dynamic view-scale crops [2], [3], [4] to improve on it. These gains are incremental and limited to selected setups, whereas the original CutMix usually suffices and remains the common benchmark.

Poisson Distribution

A discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event.

The probability of observing exactly $k$ events is given by the formula:

$$P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}$$

Where:

- $k$: The actual number of events we are interested in.

- $\lambda$: The average number of events per interval (the rate parameter).

- $e$: Euler’s number (approximately 2.71828).

- $k!$: The factorial of $k$.
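The probability mass function translates directly into code. This sketch uses only the standard library. The function name `poisson_pmf` and the example rate of 3 events per interval are illustrative choices.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k events when the mean rate per interval is lam."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


# With an average of 3 events per interval, the probabilities over all
# possible counts should sum to (approximately) 1.
total = sum(poisson_pmf(k, 3.0) for k in range(50))
```

For `k = 0` the formula reduces to `exp(-lam)`, the probability that nothing happens in the interval.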

precision (metric)

The fraction of predicted positives that are actually positive: TP / (TP + FP). You’re fishing with a net. You use a wide net, and catch 80 of 100 total fish in a lake. That’s 80% recall. But you also get 80 rocks in your net. That means 50% precision: half of the net’s contents is junk. You could use a smaller net and target one pocket of the lake where there are lots of fish and no rocks, but you might only get 20 of the fish in order to get 0 rocks. That is 20% recall and 100% precision.

recall (metric)

The fraction of actual positives that the model finds: TP / (TP + FN). In the fishing analogy under precision (metric), catching 80 of the 100 fish in the lake is 80% recall regardless of how many rocks come up with them, while the smaller net that catches only 20 fish has 20% recall.

Also called the True Positive Rate (TPR).
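The fishing numbers can be checked with a short sketch in which fish caught are true positives, rocks caught are false positives, and fish left in the lake are false negatives. The function name `precision_recall` is illustrative.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Return (precision, recall) from raw counts, using 0.0 for empty denominators."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Wide net: 80 fish caught, 80 rocks caught, 20 fish missed.
wide_net = precision_recall(tp=80, fp=80, fn=20)    # (0.5, 0.8)

# Small net: 20 fish caught, 0 rocks caught, 80 fish missed.
small_net = precision_recall(tp=20, fp=0, fn=80)    # (1.0, 0.2)
```

The two nets illustrate the usual trade-off: widening the net raises recall at the expense of precision, and narrowing it does the reverse.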

Regularization

With only 300 samples per class, there is too little data for Stochastic Gradient Descent (SGD) to reliably find flat, generalizable minima. We have to find a regularization strategy that mitigates this limitation and steers training away from sharp minima (overfitting). This forces us to squeeze as much as we can out of every state-of-the-art regularization technique to make the data as meaningful as possible.

SEAM

It has been demonstrated that standard CAMs fail to cover entire objects because they are invariant to translation and other transformations [1]. The localization and segmentation we seek require equivariance, where masks must transform alongside the augmentation. Yude Wang et al. introduced the Self-supervised Equivariant Attention Mechanism (SEAM) and the Equivariant Cross Regularization (ECR) loss. One pathway takes the original image $I$, generates its CAM $F(I)$, and then applies a spatial transformation $A(\cdot)$ to that CAM. In the other pathway, $A$ is applied to the input first, yielding the second CAM $F(A(I))$. The loss then enforces that these two pathways yield the same spatial tensor:

$$\mathcal{L}_{eq} = \lVert A(F(I)) - F(A(I)) \rVert_1$$

By adding this to the standard cross-entropy loss, we force the network to produce stable object localizations rather than peaked activations that shift wildly when the image is augmented.
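The two-pathway consistency idea can be illustrated with a toy NumPy sketch. Everything here is a stand-in: the "CAM" functions are simple array operations rather than a network, the transform is a horizontal flip rather than the rescaling used in the paper, and the loss is a mean absolute difference. None of the names come from the SEAM codebase.

```python
import numpy as np


def hflip(x: np.ndarray) -> np.ndarray:
    """Spatial transform used for the demo: mirror along the width axis."""
    return x[..., ::-1]


def equivariance_loss(cam_fn, image: np.ndarray, transform) -> float:
    """Mean absolute gap between 'CAM then transform' and 'transform then CAM'."""
    cam_then_transform = transform(cam_fn(image))
    transform_then_cam = cam_fn(transform(image))
    return float(np.mean(np.abs(cam_then_transform - transform_then_cam)))


def pointwise_cam(image: np.ndarray) -> np.ndarray:
    """Toy map that acts per pixel, so it commutes with the flip."""
    return np.maximum(image, 0.0)


def position_biased_cam(image: np.ndarray) -> np.ndarray:
    """Toy map whose response depends on horizontal position, so it does not."""
    ramp = np.linspace(0.0, 1.0, image.shape[-1])
    return np.maximum(image, 0.0) * ramp


image = np.random.default_rng(0).uniform(0.1, 1.0, size=(4, 6))
loss_equivariant = equivariance_loss(pointwise_cam, image, hflip)
loss_biased = equivariance_loss(position_biased_cam, image, hflip)
```

The pointwise map incurs zero loss, while the position-dependent one is penalized. In training, this penalty is the pressure that pushes a network toward localizations that move with the image.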

In the original implementation by Yude Wang et al., rotation and translation failed to provide sufficient supervision, while rescaling produced the major gains ( to ).

Sparse mixture-of-expert models

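Mixture-of-experts (MoE) models that are sparsely activated: a router sends each input to only a small subset of expert sub-networks, so the compute per input stays well below what the total parameter count would suggest.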

WSOL

Weakly Supervised Object Localization (WSOL) is a task where we try to locate objects in an image using only image-level classification labels rather than detailed bounding boxes or masks.

WSSS

Weakly-Supervised Semantic Segmentation (WSSS) is a task where the training data lacks pixel-level labels and masks, so the model must learn segmentation from weaker signals such as image-level labels. Both WSOL and WSSS apply to our problem, and there is significant literature available.