Bert-TweetEval: Natural Language Classification

Abstract

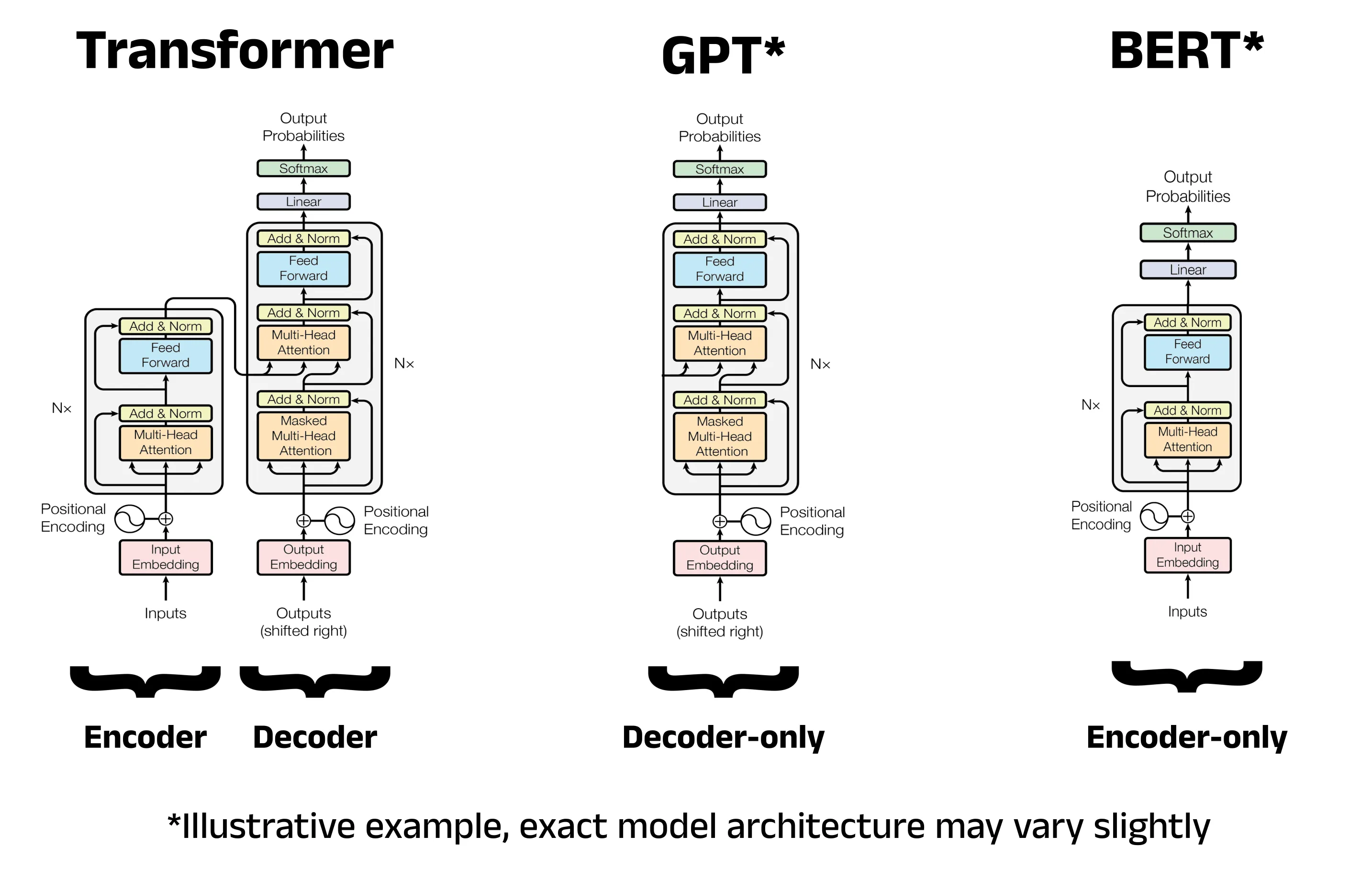

Extracting sentiment and intent from natural language holds immense value for strategic decision-making in many domains. A variety of transformer architectures and base models have emerged as notable language processors, but they vary widely in training scale, vocabulary, and parameter count. In real-world deployment, models can be constrained by cost and latency. Models deployed to production are also subject to real-world stress cases, such as lexical diversity, unknown symbols and vocabulary, and class imbalance in the training data. In this work, we analyze the performance of lightweight base and fine-tuned variants of Bidirectional Encoder Representations from Transformers (BERT) models on the TweetEval emotion classification task. We fine-tune and compare DistilBERT and DistilRoBERTa variants, and examine the suitability of their tokenizers (WordPiece, BPE) for the emotion classification domain and their impact on performance. We construct a framework to stress-test the models under distribution shifts and corrupted inputs, conduct structured error analysis, and interpret model confidence and calibration. We also benchmark two competitive LLMs, Qwen3-4B-Instruct-2507 and GPT 4o-mini, under consistent prompting strategies on the same classification task. All code is publicly available at https://github.com/bencejdanko/bert-tweeteval. Models are publicly available at https://huggingface.co/bdanko.

Introduction and Related Work

TweetEval [1] consists of seven Twitter-specific classification tasks: emoji prediction, emotion recognition, hate speech detection, irony detection, offensive language identification, sentiment analysis, and stance detection. Combinations of TweetEval and BERT variants have already been explored extensively. BERTweet [2], a RoBERTa-based model trained on a corpus of 850 million English tweets, established the state-of-the-art (SOTA) baseline across most of TweetEval’s subtasks and demonstrated the value of domain-specific pre-training by outperforming the original BERT and RoBERTa. TimeLMs [3] later introduced models continuously trained on fresh Twitter data, outperforming BERTweet in all TweetEval domains except irony detection. SuperTweetEval [4] has since been released, adding several NLP task domains that TweetEval lacked.

Task Description

In this work, we target the emotion classification task from the original TweetEval. Each sample is labeled with one of four classes: “anger”, “sadness”, “joy”, or “optimism”. The dataset is heavily imbalanced: “anger” is overrepresented at 42.98% of the dataset and “optimism” is underrepresented at 9.03%, while “joy” and “sadness” sit at 21.74% and 26.25% respectively. In total, we have a training set of 3257 samples and a validation set of 374 samples. We test our results on 1421 samples.

We compare models across these key metrics:

- Accuracy: The number of correct classifications divided by the total number of evaluated samples.

- Macro F1: The arithmetic mean of the F1 scores calculated for each individual class. It treats all classes equally, regardless of how many samples each class contains, so under- and overrepresented classes are given the same weight: $\text{Macro F1} = \frac{1}{C}\sum_{c=1}^{C} \text{F1}_c$, where $C$ is the number of classes.

- Precision: The number of true positives divided by the number of samples predicted as positive. It measures the quality of a positive prediction. We report the macro average.

- Recall: The true positive rate, that is, the share of all positive cases in the data that the classifier identifies. We report the macro average.

- ms/100 samples: A test of throughput. We measure this by running evaluation inference in batches of 100 on all our models except GPT 4o-mini, for which we instead measure wall-clock time for 100 async API requests (up to 20 concurrent).

- Expected Calibration Error (ECE): A measure of how well a model’s confidence aligns with its actual accuracy. If a model assigns a probability of 0.90 to its predictions, those predictions should be correct 90% of the time. ECE therefore exposes overconfident or underconfident models; an ECE of roughly 0.01 to 0.02 is typically considered reliable.
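The classification metrics above can be sketched in plain Python as follows. This is a minimal illustration rather than the evaluation code used for the reported numbers: the function names are hypothetical, and the ECE variant shown (10 equal-width confidence bins) is an assumption, since the binning scheme is not stated here.

```python
def macro_prf(y_true, y_pred, labels):
    """Return (macro precision, macro recall, macro F1) over `labels`."""
    precisions, recalls, f1s = [], [], []
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    n = len(labels)
    # Unweighted mean: every class counts equally, whatever its frequency.
    return sum(precisions) / n, sum(recalls) / n, sum(f1s) / n


def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE = sum over bins of (bin size / N) * |bin accuracy - bin mean confidence|.

    Assumption: equal-width bins over [0, 1]; n_bins=10 is illustrative.
    """
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / n * abs(accuracy - avg_conf)
    return ece
```

Here `confidences` would hold the maximum softmax probability per sample and `correct` whether the corresponding prediction matched the label.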

To ensure reproducibility, we set all configurable seeds to 15179996. No further data processing was done. In future work, it would be possible to experiment with NLP augmentation techniques such as Easy Data Augmentation (EDA) [5], back-translation [6], random masking, or MixUp [7] and its variants. These are out of scope for this work, so we omit them. All local tests were run on an NVIDIA L4 GPU.

Summarized Results

Evaluation Reference

| Question | Location |
|---|---|
| Part A – Zero-Shot Baseline | Final results in Table 2 |
| Part B – Fine-Tuning Transformers | Final results in Table 2 |
| | Training strategy in Section 2 |
| | Loss curves and explanation in Appendix G |
| | Deployment stress tests in Section 3 |
| Part C – Error Analysis | Error analysis in Section 4 |
| Part D – LLMs | Minimal prompt in Appendix A |
| | Structured prompt in Appendix B |
| | Final results in Table 2 |
| | Prompt explanation in Section 5 |
| Other Requirements | Summary of hyperparameters in Section 2 |
| | Final output table in Table 2 |
| | Training screenshots in Appendix D |
| | Confusion matrices in Appendix C |

In our study, we reaffirm that domain-specific training gives BERT models state-of-the-art results on NLP classification tasks. We also demonstrate the emerging competitive performance of open-source decoder models against closed-source ones.

Summarized Results from TweetEval Emotion Classification

| Model | Accuracy | Macro F1 | Macro Precision | Macro Recall | ms/100 samples | ECE |
|---|---|---|---|---|---|---|
| DistilBERT (WordPiece) | 0.083744 | 0.064219 | 0.384379 | 0.192035 | 312.318607 | 0.167510 |
| DistilRoBERTa (BPE) | 0.217452 | 0.155317 | 0.177579 | 0.200315 | 299.071175 | 0.056845 |
| bdanko/bert-tweeteval-distilbert | 0.79803 | 0.761196 | 0.767879 | 0.756296 | 0.0364 | |
| bdanko/bert-tweeteval-distilroberta | 0.788881 | 0.750644 | 0.799548 | 0.728545 | 0.0364 | |
| GPT-4o-mini (Minimal Prompt) | 0.800141 | 0.601466 | 0.653766 | 0.579754 | 5060.112734 | |
| GPT-4o-mini (Structured Prompt) | 0.821956 | 0.781499 | 0.791218 | 0.773544 | 3823.308211 | |
| Qwen3-4B-Instruct-2507 (Minimal Prompt) | 0.751583 | 0.584864 | 0.594974 | 0.581751 | 2571.471757 | |
| Qwen3-4B-Instruct-2507 (Structured Prompt) | 0.812104 | 0.758003 | 0.793805 | 0.741873 | 4931.231370 | |

Baseline Analysis

In our baseline analysis, we found that the original DistilBERT and DistilRoBERTa models severely underperformed on the TweetEval dataset. In the figures for the base DistilBERT and base DistilRoBERTa models, we see an extreme bias towards particular classes, producing predicted label distributions that do not match our dataset and domain.

Training Strategy

For both models, we train for up to 20 epochs with a batch size of 16, using AdamW with a learning rate of 2e-5 and a weight decay of 0.01. We apply early stopping via EarlyStoppingCallback, monitoring the Macro F1 score on the validation set with a patience of 3 (training halts after 3 evaluations without improvement), and keep the model with the best Macro F1 score. We choose early stopping because, in our experiments, the models overfit very easily, and we select Macro F1 as the stopping metric because it weighs each class equally, which is particularly important given our imbalanced dataset.
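The patience rule can be illustrated with a small, framework-free sketch. It is a simplified stand-in for what an early-stopping callback does, not the training code itself: the function name and the validation scores in the usage note are hypothetical.

```python
def select_best_epoch(val_macro_f1, patience=3):
    """Return (best_epoch, stopped_epoch), both 0-indexed.

    Training stops once `patience` consecutive evaluations fail to improve
    on the best validation Macro F1 seen so far; the best epoch is kept.
    """
    best_score = float("-inf")
    best_epoch = -1
    bad_evals = 0
    for epoch, score in enumerate(val_macro_f1):
        if score > best_score:
            best_score, best_epoch, bad_evals = score, epoch, 0
        else:
            bad_evals += 1
            if bad_evals >= patience:
                return best_epoch, epoch  # stop early, keep the best checkpoint
    return best_epoch, len(val_macro_f1) - 1  # ran through all epochs
```

For example, with the made-up score sequence `[0.60, 0.70, 0.74, 0.73, 0.72, 0.735, 0.80]`, the function stops at epoch 5 and keeps epoch 2, so the later 0.80 is never reached. That is the trade-off a small patience accepts in exchange for less overfitting and shorter runs.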

Corruption Stress Testing

To stress-test the models against data corruption, we randomly introduce typos, hashtag splitting, and emoji removal.

- Typos: We randomly swap, delete, or insert characters in words with a fixed probability. This tests the model’s robustness against misspelled but recognizable words.

- Split Hashtags: We identify hashtags and split CamelCase words or remove the hashtag symbol. This tests whether the model relies on the hashtag itself or on the underlying semantic content.

- Remove Emoji: Emojis strongly indicate emotion, and removing them tests if the model is robust enough to understand the other semantic cues.
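The three corruptions above might be implemented along the following lines. This is a sketch under stated assumptions, not the released implementation: the function names are hypothetical, the typo probability `p` is left as a parameter because its value is not given here, and the emoji pattern is a coarse set of Unicode ranges rather than an exhaustive one.

```python
import random
import re

# Assumption: coarse Unicode ranges covering most emoji; not exhaustive.
EMOJI_RE = re.compile(
    "[\U0001F300-\U0001FAFF\U0001F1E6-\U0001F1FF\u2600-\u27BF\uFE0F\u200D]+"
)
HASHTAG_RE = re.compile(r"#(\w+)")


def add_typo(word, rng):
    """Apply one random character swap, deletion, or insertion to `word`."""
    if len(word) < 2:
        return word
    i = rng.randrange(len(word) - 1)
    op = rng.choice(["swap", "delete", "insert"])
    if op == "swap":
        return word[:i] + word[i + 1] + word[i] + word[i + 2:]
    if op == "delete":
        return word[:i] + word[i + 1:]
    return word[:i] + rng.choice("abcdefghijklmnopqrstuvwxyz") + word[i:]


def corrupt_typos(text, p, rng):
    """Corrupt each space-separated word independently with probability `p`."""
    return " ".join(
        add_typo(w, rng) if rng.random() < p else w for w in text.split(" ")
    )


def split_hashtags(text):
    """Turn '#SoAngry' into 'So Angry': drop '#' and split CamelCase."""
    def _split(match):
        return re.sub(r"(?<=[a-z])(?=[A-Z])", " ", match.group(1))
    return HASHTAG_RE.sub(_split, text)


def remove_emoji(text):
    """Delete every character matched by the (approximate) emoji pattern."""
    return EMOJI_RE.sub("", text)
```

Passing an explicit `random.Random(seed)` instance keeps the corrupted evaluation set reproducible across runs.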

We also conduct a domain shift simulation. We create a shift by filtering for tweets without mentions, links, or hashtags, and compare performance on each resulting subset.

- Mentions: We compare performance once we strip all @user mentions from the evaluation.

- Links: We compare performance once we strip all http links.

- Hashtags: We compare performance once we strip all hashtags.
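One way to read the domain-shift setup is as subsetting: keep only the evaluation tweets that lack a given Twitter-specific feature, then re-score the model on each subset. The sketch below assumes that reading. The function name, the regular expressions, and the `(text, label)` tuple layout are all illustrative assumptions.

```python
import re

MENTION_RE = re.compile(r"@\w+")
LINK_RE = re.compile(r"http\S*")
HASHTAG_RE = re.compile(r"#\w+")


def domain_shift_subsets(samples):
    """Split (text, label) pairs into subsets that lack each feature."""
    def lacks(pattern, text):
        return pattern.search(text) is None

    return {
        "no_mentions": [s for s in samples if lacks(MENTION_RE, s[0])],
        "no_links": [s for s in samples if lacks(LINK_RE, s[0])],
        "no_hashtags": [s for s in samples if lacks(HASHTAG_RE, s[0])],
        # Tweets with none of the three features.
        "all_shifts": [
            s for s in samples
            if lacks(MENTION_RE, s[0])
            and lacks(LINK_RE, s[0])
            and lacks(HASHTAG_RE, s[0])
        ],
    }
```

Because each subset has a different size and class mix, metrics computed on it are not directly comparable sample-for-sample with the full test set; they indicate how the model behaves on tweets that look less like typical Twitter text.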

Corruption Ablations

| Dataset Shift / Corruption | Accuracy (DistilBERT) | ECE (DistilBERT) | Macro F1 (DistilBERT) | Macro Precision (DistilBERT) | Macro Recall (DistilBERT) | Accuracy (DistilRoBERTa) | ECE (DistilRoBERTa) | Macro F1 (DistilRoBERTa) | Macro Precision (DistilRoBERTa) | Macro Recall (DistilRoBERTa) |
|---|---|---|---|---|---|---|---|---|---|---|
| All corruptions | 0.777621 | 0.186762 | 0.731208 | 0.748215 | 0.719606 | 0.769880 | 0.027936 | 0.729321 | 0.785784 | 0.704836 |
| Emoji Removal | 0.796622 | 0.169403 | 0.759471 | 0.765310 | 0.754525 | 0.788177 | 0.044574 | 0.754264 | 0.799723 | 0.730673 |
| Hashtag Splitting | 0.797326 | 0.169666 | 0.758076 | 0.767174 | 0.751265 | 0.790289 | 0.049607 | 0.750437 | 0.804039 | 0.727826 |
| Typos | 0.774806 | 0.192172 | 0.735679 | 0.750336 | 0.726397 | 0.762139 | 0.032455 | 0.719843 | 0.767177 | 0.700259 |
| Baseline | 0.798030 | 0.169843 | 0.761196 | 0.767879 | 0.756296 | 0.788881 | 0.042115 | 0.750644 | 0.799548 | 0.728545 |
| All Domain Shifts | 0.798030 | 0.169843 | 0.761196 | 0.767879 | 0.756296 | 0.788881 | 0.042115 | 0.750644 | 0.799548 | 0.728545 |
| No Hashtags | 0.779412 | 0.183594 | 0.729214 | 0.740608 | 0.721849 | 0.783422 | 0.055099 | 0.730822 | 0.795115 | 0.706223 |
| No HTTP Links | 0.798030 | 0.169843 | 0.761196 | 0.767879 | 0.756296 | 0.788881 | 0.042115 | 0.750644 | 0.799548 | 0.728545 |
| No @ Mentions | 0.786865 | 0.178595 | 0.764952 | 0.763810 | 0.766616 | 0.788104 | 0.041246 | 0.763955 | 0.796607 | 0.749939 |

Error Analysis

Eight misclassified samples from both models can be seen in Appendix F. We can see how misspellings, such as “Deppression”, cause the tokenizer to emit fragments that are hard to interpret: “#”, “de”, “##pp”, “##ress”, “##ion”. These fragments cannot be clearly mapped to any particular emotional state, and the misspelled word itself may be absent from the pre-trained vocabulary.
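The fragmentation follows from how WordPiece splits a word: it greedily takes the longest prefix found in the vocabulary, then continues with `##`-prefixed continuation pieces. The toy sketch below illustrates this for the word part of the hashtag. The five-entry vocabulary is hypothetical and chosen only to reproduce the fragments quoted above; a real tokenizer has tens of thousands of entries and also lowercases and splits punctuation such as “#” beforehand.

```python
def wordpiece(word, vocab, unk="[UNK]"):
    """Greedy longest-match-first WordPiece split of a single word."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        piece = None
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # continuation-piece marker
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk]  # no piece matches: the whole word is unknown
        pieces.append(piece)
        start = end
    return pieces


# Hypothetical toy vocabulary, for illustration only.
TOY_VOCAB = {"depression", "de", "##pp", "##ress", "##ion"}

print(wordpiece("depression", TOY_VOCAB))   # ['depression']
print(wordpiece("deppression", TOY_VOCAB))  # ['de', '##pp', '##ress', '##ion']
```

The correctly spelled word maps to a single token with its own learned embedding, while one extra letter yields four generic fragments, none of which carries the emotional meaning on its own.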

For misspellings, we would need to implement character-level data augmentation to simulate typos. We would inject random character insertions, deletions, and keyboard-distance typos, especially targeting emotional keywords. By forcing the model to see misspelled variants during training, we would train the attention heads to recognize the resulting fragment patterns, which may improve the score.

Other misclassified sentences contain words that are semantically biased towards one class, where subtle but important tokens change the meaning significantly. For example, both tokenizers correctly break down “revolting”, but neither model weighs “i am” heavily enough to overcome the angry connotations of the word. Thus “i am revolting” is misclassified as “anger”.

To counteract this, we need to increase our training samples or introduce more robust data augmentation to broaden the semantic representations our models learn. The most suitable technique in this case would be counterfactual data augmentation (CDA), where we generate additional samples that use the word “revolting” in a range of contexts, diversifying the model’s interpretation of “revolting” across a greater number of samples and classes.

Open Source and Closed Models

We evaluated two LLMs, Qwen3-4B-Instruct-2507 and GPT 4o-mini, on the prompts from Appendix A and Appendix B. Without any manual tuning, both achieved results competitive with our fine-tuned models when given the structured prompt (Table 2), and their responses aligned with the distribution of the training data.

The structured prompt outperformed the minimal prompt on every metric, except throughput for Qwen. A plausible explanation is that longer, more explicit prompts tap further into the parametric memory of a model, eliciting pre-trained knowledge that produces more aligned responses. Our longer prompt thus increased the likelihood that the model produced an accurate emotion classification. Without such context priming, the model is not activated in the same specialized manner and is less likely to produce an aligned response.
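The throughput figure for GPT 4o-mini is measured differently from the local models: as wall-clock time for 100 async API requests with up to 20 in flight at once. A minimal sketch of such a measurement is shown below. The function names are hypothetical and `fake_classify` is a stand-in that only sleeps; a real run would replace it with the actual API call.

```python
import asyncio
import time


async def timed_batch(texts, classify, max_concurrency=20):
    """Return (results, elapsed_ms) for classifying `texts` with bounded concurrency."""
    sem = asyncio.Semaphore(max_concurrency)

    async def worker(text):
        async with sem:  # at most `max_concurrency` requests in flight
            return await classify(text)

    start = time.perf_counter()
    results = await asyncio.gather(*(worker(t) for t in texts))
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return results, elapsed_ms


async def fake_classify(text):
    """Hypothetical stand-in for an API call: waits briefly, returns a fixed label."""
    await asyncio.sleep(0.01)
    return "joy"


if __name__ == "__main__":
    labels, ms = asyncio.run(timed_batch(["some tweet"] * 100, fake_classify))
    print(f"{len(labels)} requests in {ms:.1f} ms")
```

A number obtained this way includes network latency and server-side queuing, so it is best read as an end-to-end deployment cost rather than a like-for-like comparison with local GPU inference.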

The final results for each tested model have been collected and summarized in Table 2.