SJSU x Mobilint: Neural Processing Units, and the MLA-100, an accelerated AI chip

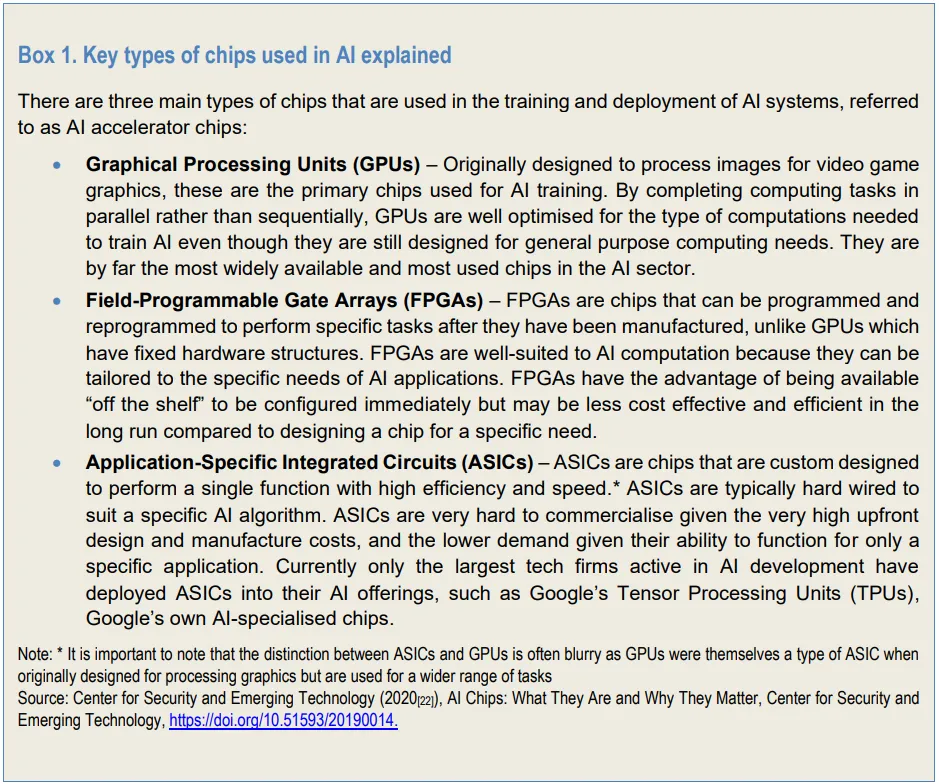

Neural Processing Units (NPUs) are specialized hardware accelerators, a class of Application-Specific Integrated Circuit (ASIC) engineered to handle the heavy matrix and tensor mathematics required for artificial intelligence and machine learning. While CPUs are built for general-purpose tasks and GPUs are traditionally used for parallel graphics rendering or training massive AI models, NPUs are optimized for inference: the process of running already-trained AI models efficiently.

The competitive landscape

Currently, only the largest technology companies active in AI development (such as Google, Amazon, Microsoft, and Meta) have successfully deployed custom ASICs for their AI offerings. These dedicated chips are typically not sold on the open market; rather, they are available exclusively through the respective firms’ own cloud computing services and are tailored for specific use cases.

While several smaller startups are attempting to manufacture specialist AI chips, none have managed to secure a significant market share to date. Dedicated chips are exceptionally difficult to commercialize due to the massive initial investments required for design and manufacturing. Because ASICs are custom designed and hard-wired to execute a specific function or AI algorithm, their overall market demand is inherently lower than that of versatile, general-purpose chips like GPUs.

The future of AI growth

Inference workloads are generally less complex than model training, prioritizing speed, cost, and energy efficiency. The pressure to optimize inference has become especially important as tokens per watt becomes a defining performance metric, alongside achieved utilization. Custom-designed ASICs can significantly improve the efficiency of AI compute, allowing operators to reduce their reliance on power-hungry general-purpose AI chips. While Nvidia maintains a dominant market position over the chips used for the training phase of AI, competition authorities suggest that the inference phase may foster a more competitive landscape in the long term. The emergence of new, smaller AI models may also shift overall compute needs, potentially altering market dynamics and creating new opportunities for these dedicated chips.

Startups like Taalas are taking an extreme approach by sacrificing flexibility entirely for the sake of raw speed and economics. By hardwiring an entire foundation model directly onto a chip, Taalas removes almost all programmability. In the case of the Taalas HC1 chip, the only programmable element is a small SRAM used to store fine-tuned weights and the Key-Value (KV) cache. This zero-margin-for-error specialization yields performance that general-purpose GPUs currently cannot match. By hardwiring a heavily optimized Llama 3.1 Instruct 8B model, the HC1 can reach 16,000 tokens per second, or about 40–48 book pages per second. By comparison, xAI’s Grok provider, known to use the Nvidia Blackwell architecture, has been benchmarked at an average of about 600 tokens per second.
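To put those figures side by side, here is a small back-of-the-envelope calculation using only the numbers quoted above. The two throughput figures come from different models and different benchmarks, so the ratio is a rough indication rather than a like-for-like comparison. The variable names are my own.

```python
# Figures quoted in the paragraph above.
TAALAS_TOKENS_PER_S = 16_000
GROK_TOKENS_PER_S = 600
PAGES_PER_S_RANGE = (40, 48)

# Raw throughput ratio between the two quoted figures.
speedup = TAALAS_TOKENS_PER_S / GROK_TOKENS_PER_S

# Tokens per book page implied by the "40-48 pages per second" estimate.
tokens_per_page = [TAALAS_TOKENS_PER_S / pages for pages in PAGES_PER_S_RANGE]

print(f"Throughput ratio: {speedup:.1f}x")
print(f"Implied tokens per page: {tokens_per_page[1]:.0f} to {tokens_per_page[0]:.0f}")
```

In other words, the page estimate assumes a book page holds roughly 333 to 400 tokens, and the quoted HC1 throughput is about 27 times the quoted Grok average.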

Another startup, Cerebras, recently partnered with OpenAI to develop custom architecture and inference for the GPT-5.3-Codex-Spark model. It is claimed to operate at about 1,000 tokens per second, and it is a significantly more powerful model than Llama 3.1. Extropic AI is projected to soon release its own hyper-efficient thermodynamic chip, which is architecturally alien but theoretically delivers a 10,000x increase in generative efficiency over GPUs. These trends point to a surge in the parallel development of novel generative models and the ASICs designed to run them natively.

Industry demand for dedicated ASICs is projected to increase and diversify as the AI market shifts its focus toward inference. The trends presented here reinforce the view that the inference phase may foster a more competitive landscape than training in the long term. While Nvidia has earned its position by creating the industry-standard developer tooling for GPUs, future growth may lie with companies that can streamline not only model development but also the hyper-accelerated, dedicated ASIC ecosystems that run those models efficiently.

Mobilint

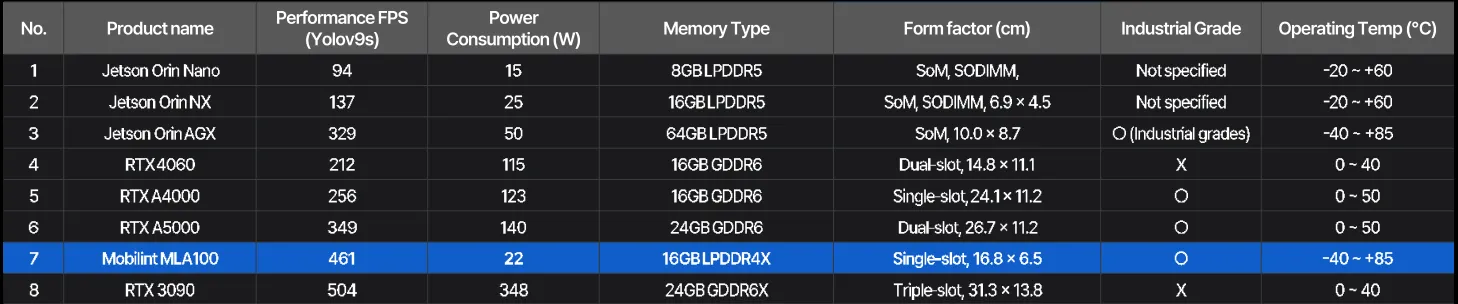

Mobilint is an NPU chip designer that has partnered with SEMIFIVE to manufacture chips based on Mobilint’s ARIES and REGULUS architectures. Built on a 14-nanometer process, SEMIFIVE’s hardware implementation is meant to deliver Mobilint’s design cost-efficiently. Mobilint’s flagship product, the MLA-100, is a PCIe AI accelerator card that delivers 80 TOPS (Tera Operations Per Second) and 66.7 GB/s of memory bandwidth at a power draw of just 25 watts. It is designed to run hundreds of advanced deep learning models with high accuracy.
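The headline numbers are easier to compare against other accelerators once they are reduced to ratios. The sketch below derives two from the quoted specifications: efficiency in TOPS per watt, and the number of operations the card can perform per byte of memory traffic (the "ridge point" in a roofline analysis). It assumes the 80 TOPS and 66.7 GB/s figures are peak values, and the variable names are my own.

```python
# Specifications quoted above for the MLA-100 (assumed to be peak values).
PEAK_TOPS = 80            # tera-operations per second
POWER_W = 25              # watts
BANDWIDTH_GB_S = 66.7     # gigabytes per second

# Compute efficiency: operations delivered per watt of power.
tops_per_watt = PEAK_TOPS / POWER_W

# Roofline ridge point: a workload needs at least this many operations
# per byte moved from memory to be compute-bound instead of memory-bound.
ops_per_byte = (PEAK_TOPS * 1e12) / (BANDWIDTH_GB_S * 1e9)

print(f"Efficiency: {tops_per_watt:.1f} TOPS/W")
print(f"Ridge point: {ops_per_byte:.0f} ops/byte")
```

This works out to 3.2 TOPS/W and roughly 1,200 operations per byte. The high ridge point suggests that models which reuse each loaded weight many times, such as convolutional networks, are better placed to approach the peak figure than workloads dominated by memory traffic.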

San José State University’s Applied Data Intelligence Department has invested in several MLA-100 chips and made them a core part of its artificial-intelligence program, allowing students to use the latest industry hardware to build, deploy, and optimize real-world generative AI and machine learning systems. This targeted hardware investment gives students the practical experience needed to deploy scalable AI solutions and lead in Silicon Valley’s rapidly evolving tech landscape.

Getting started

Mobilint has prepared example implementations in its Model Zoo on GitHub, a curated collection of AI models optimized for Mobilint’s architecture. The collection includes demonstrations of classical vision models and Transformers.

However, the NPU does not natively support the core self-attention operations of Transformers, so that work is offloaded to the CPU. The Transformer examples rely on graph patching and CPU offloading through the Hugging Face Transformers integration: the NPU handles static linear projections and convolutions, while operations it cannot run natively stay on the host CPU and execute as standard PyTorch/Transformers code.
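To make the split concrete, here is a minimal, self-contained sketch of how an ordered list of operations could be partitioned into NPU and CPU segments. It does not use Mobilint’s SDK. The `NPU_OPS` set, the op names, and the toy attention block are all hypothetical and simply follow the description above (linear projections and convolutions on the NPU, everything else on the host).

```python
from itertools import groupby

# Hypothetical support set for illustration only; not Mobilint's official list.
NPU_OPS = {"conv2d", "linear"}


def assign_device(op: str) -> str:
    """Place an op on the NPU if it is in the support set, else on the CPU."""
    return "npu" if op in NPU_OPS else "cpu"


def partition(ops: list[str]) -> list[tuple[str, list[str]]]:
    """Group consecutive ops that land on the same device into one segment."""
    return [(device, list(group)) for device, group in groupby(ops, key=assign_device)]


# Toy attention block: Q/K/V and output projections are linear layers,
# while the attention core (matmul, softmax, matmul) is assumed unsupported.
attention_block = ["linear", "linear", "linear", "matmul", "softmax", "matmul", "linear"]

segments = partition(attention_block)
for device, group in segments:
    print(f"{device}: {group}")

# Each boundary between segments implies a data transfer between NPU and host.
transfers = len(segments) - 1
print(f"Device transfers per block: {transfers}")
```

In this toy example every attention block crosses the NPU/CPU boundary twice, which is why the amount of work left on the host matters for end-to-end Transformer throughput.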

It’s difficult to find a definitive list of supported operations, and equally difficult to tell which operations the compiler technically accepts but still runs through CPU fallback. I’ve prepared an unofficial, independently tested list of operations for the v0.12.0.0 qb compiler, available here.

Preparing the Compiler

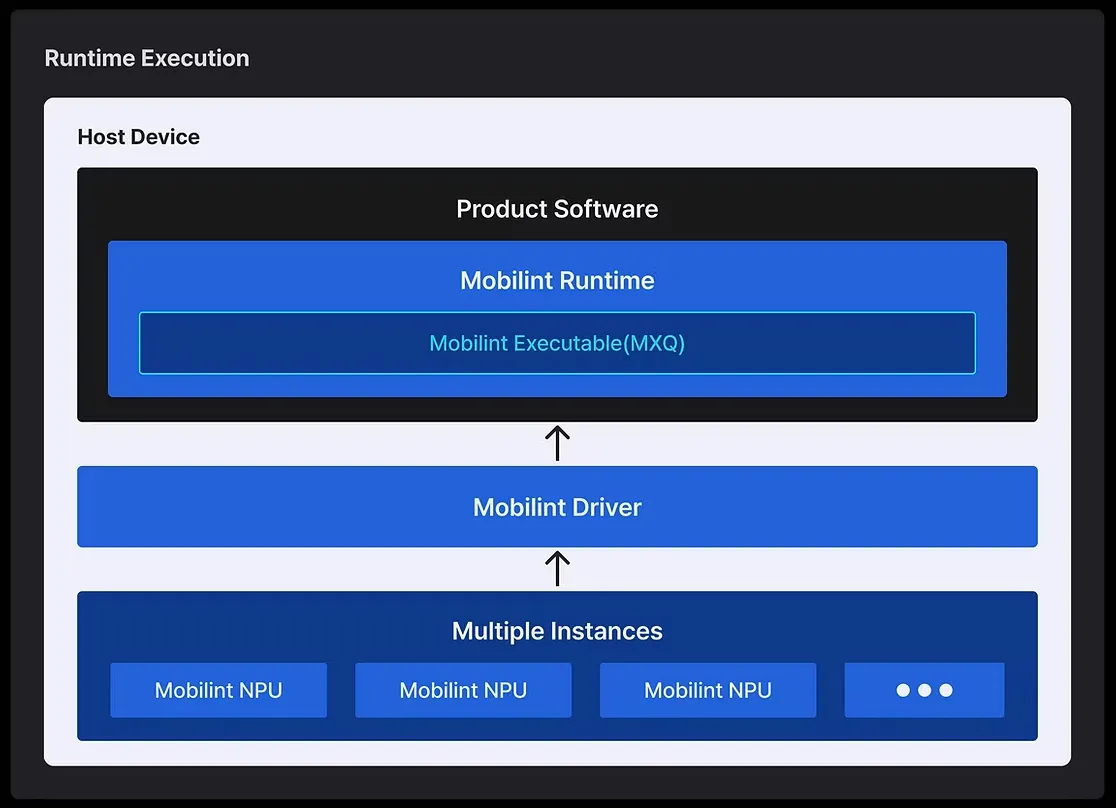

To run on the MLA-100, your model must be compiled to the ARIES-specific Mobilint Executable (MXQ) format. The compiler, qbcompiler, is available through Mobilint’s SDK (you must be partnered with Mobilint and have an account in order to download it). Install the compiler inside one of Mobilint’s prepared Docker environments, which are published in Mobilint’s releases on Docker Hub.

To better visualize your model and its compatibility, use the Netron project.
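Netron can be launched from Python as well as from its desktop and web apps. The sketch below wraps the `netron.start` call from the `netron` package on PyPI. It assumes you are inspecting the source model before compilation, for example an ONNX export; the `model.onnx` file name and the `open_in_netron` helper are hypothetical.

```python
from pathlib import Path


def open_in_netron(model_path: str) -> None:
    """Open a model file in Netron's browser-based viewer."""
    path = Path(model_path)
    if not path.is_file():
        raise FileNotFoundError(f"No model file at {path}")

    # Imported lazily so the path check works even without Netron installed.
    import netron  # install with: pip install netron

    netron.start(str(path))


# Example usage (hypothetical file name):
# open_in_netron("model.onnx")
```

Walking the graph in Netron before compiling makes it easier to spot the operator types a model uses and to check them against the operations you expect the NPU to handle.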

Inference